4 Reasons Mobile Construction Robots Are a Contractor's Best Friend

The Role of Inertial Measurement Unit (IMU) in 3D Time-of-Flight Cameras

Why Do Hardware Startups Fail?

Reliable Robotics Demonstrates Flexibility of Autonomous Flight System in an Operational Mission for the U.S. Air Force

Avangrid Pilots Mobile Robot Dog to Advance Substation Inspections with Artificial Intelligence

Anyware Robotics Emerges from Stealth Mode to Reveal Its Pixmo Robots for Container and Truck Unloading

Addressing the Skills Gap in Manufacturing through Robotics Training

Exploring 3D Trends in Machine Vision Industries: Tomorrow's Vision Today

Women in Engineering: Embracing Diversity & Encouraging Passion to Optimize Outcomes

Amazon and iRobot agree to terminate pending acquisition

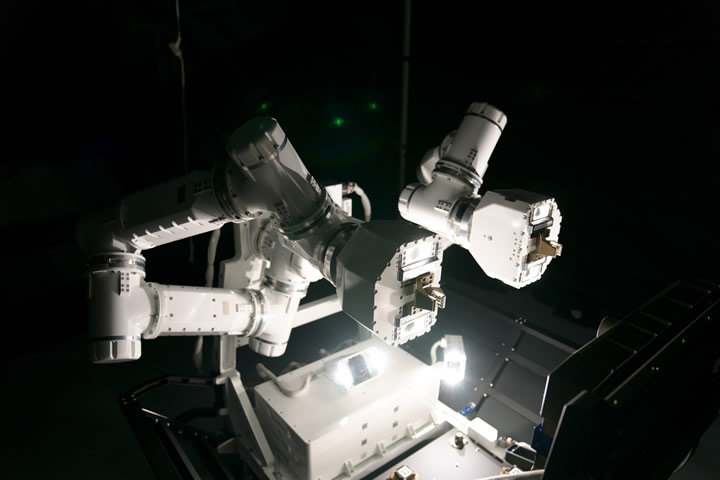

GITAI Autonomous Robotic Arm Set to Launch on Jan. 29 to International Space Station

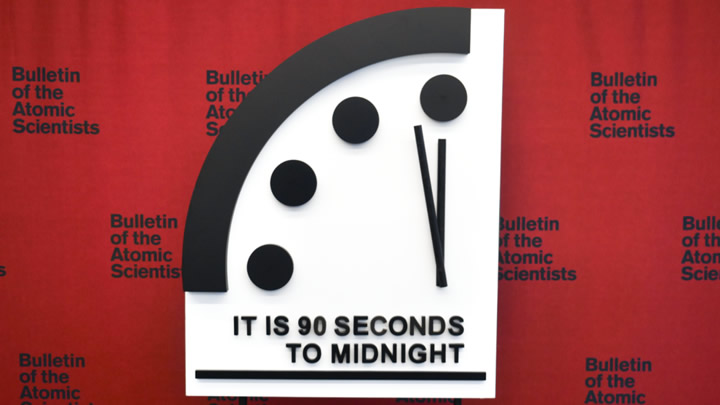

Doomsday Clock Remains at 90 Seconds to Midnight

CES 2024 Spotlight: Kepler's Humanoid Robot Launch Gains International Recognition

Study: Robots and AI Offer Significant Carbon Emissions Reductions by Operating Centuries-Old Industries Better

Exploring the Future of Machined Exoskeleton Technology in Medical Rehabilitation
