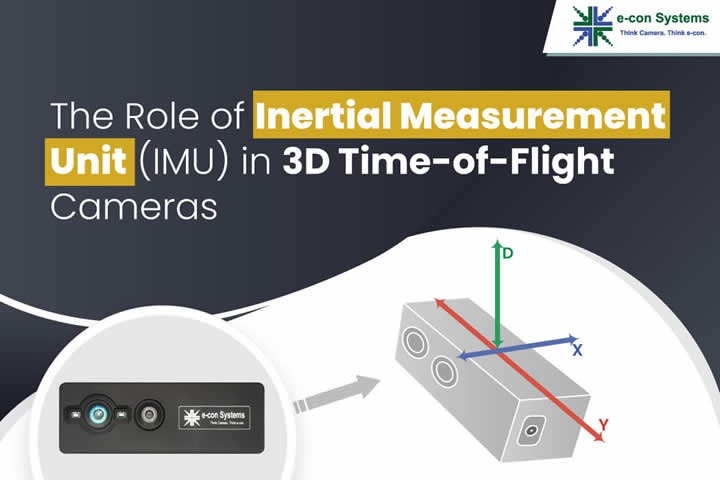

An Inertial Measurement Unit (IMU) detects movements and rotations across six degrees of freedom, representing the types of motion a system can experience.

Prabu Kumar | e-con Systems

Robotic navigation has progressed significantly with the evolution of sensors based on LiDAR and radar technologies. However, these sensors do not capture everything: they sense the surroundings but do not directly measure the robot's own acceleration and rotation. An Inertial Measurement Unit (IMU) bridges this data gap by providing detailed motion dynamics that complement the information from conventional sensors. Especially when paired with 3D Time-of-Flight (ToF) cameras, the IMU enables a more accurate spatial understanding, increasing the dependability of robotic navigation systems.

In this blog, you'll gain insights into the significance of the IMU, especially in redefining the capabilities of 3D ToF cameras.

What is an Inertial Measurement Unit (IMU)?

An IMU is designed to detect movement and rotation across six degrees of freedom. A degree of freedom (DoF) is an independent way in which an object can move within a space; in simpler terms, the degrees of freedom are the distinct types of motion a system can experience. For a rigid body, the six are translation along three axes and rotation about those same three axes.

Specifically, an IMU measures linear acceleration along three axes (typically with an accelerometer) and angular rate about the same three axes (typically with a gyroscope), covering the critical rotational parameters of roll, pitch, and yaw. Together, these measurements provide a comprehensive 3-dimensional understanding of an object's spatial orientation and movement. The utility of the IMU goes beyond measurement: by providing real-time data, it connects the virtual and physical worlds.
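To make the accelerometer/gyroscope split concrete, here is a minimal sketch of a complementary filter, one common (but not the only) way to fuse the two into roll and pitch estimates. It assumes an axis convention where a level device at rest reads about +9.81 m/s² on z, gyro rates in rad/s, and a blending factor `alpha` of 0.98; these are illustrative choices, not values from any specific IMU. Yaw cannot be corrected this way, because gravity carries no heading information.

```python
import math


def accel_roll_pitch(ax, ay, az):
    """Roll and pitch (radians) implied by gravity; valid only when the device is not accelerating."""
    roll = math.atan2(ay, az)
    pitch = math.atan2(-ax, math.hypot(ay, az))
    return roll, pitch


def complementary_update(roll, pitch, gyro, accel, dt, alpha=0.98):
    """One filter step: trust the gyro short-term, let the accelerometer correct long-term drift.

    gyro  -- (gx, gy, gz) angular rates in rad/s about x (roll) and y (pitch); gz is unused here
    accel -- (ax, ay, az) specific force in m/s^2
    dt    -- time since the previous sample, in seconds
    alpha -- blending factor (assumed value; tune per sensor)
    """
    gx, gy, _ = gyro
    # Integrate angular rate (small-angle approximation that ignores axis coupling).
    roll_gyro = roll + gx * dt
    pitch_gyro = pitch + gy * dt
    # Absolute but noisy tilt reference from the direction of gravity.
    roll_acc, pitch_acc = accel_roll_pitch(*accel)
    # Blend: high-pass the gyro estimate, low-pass the accelerometer estimate.
    roll = alpha * roll_gyro + (1 - alpha) * roll_acc
    pitch = alpha * pitch_gyro + (1 - alpha) * pitch_acc
    return roll, pitch


if __name__ == "__main__":
    # A level, stationary device that starts with a 0.1 rad roll error.
    roll, pitch = 0.1, 0.0
    for _ in range(500):
        roll, pitch = complementary_update(roll, pitch, (0.0, 0.0, 0.0), (0.0, 0.0, 9.81), dt=0.005)
    print(f"roll={roll:.6f} pitch={pitch:.6f}")  # the roll error decays toward zero
```

The gyro term gives smooth short-term tracking but drifts; the accelerometer term is drift-free but noisy during motion, which is why the two are blended rather than used alone.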

Benefits of the Inertial Measurement Unit (IMU)

- Enhanced depth awareness: Cameras on robots, drones, and handheld devices are often in motion. An IMU measures that motion, so the depth pipeline can account for it while the camera operates dynamically. As a result, depth data retains its integrity and accuracy whether it is captured from a static or a moving viewpoint.

- SLAM and tracking: The IMU feature supports advanced operations like Simultaneous Localization and Mapping (SLAM). Its motion data improves point-cloud alignment between frames and strengthens the system's awareness of its environment, leading to more efficient mapping and tracking. This can substantially improve how systems map, understand, and interact with their surroundings.

- Easy registration and calibration: Registration and calibration, traditionally time-intensive tasks, especially for handheld scanning systems, become more user-friendly. Because the IMU reports how the device moved between captures, that motion can be accounted for when aligning scans, improving precision in the final output. As imaging devices such as drones and augmented/virtual reality systems become more prevalent across industries, the role of IMUs in delivering consistent, high-quality results becomes more vital.
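The point-cloud alignment benefit can be sketched as follows: integrate the gyroscope samples captured between two depth frames into a rotation, then rotate the newer frame's points into the older frame's coordinates before running a registration step such as ICP. This is a minimal, standard-library-only sketch under stated assumptions: the IMU and depth-camera axes are aligned (in practice an extrinsic calibration rotation is needed), the angular rate is constant within each sample interval, and translation is left to the registration step because a gyroscope measures rotation only. The function names are hypothetical and not part of any e-con Systems SDK.

```python
import math

IDENTITY = ((1.0, 0.0, 0.0), (0.0, 1.0, 0.0), (0.0, 0.0, 1.0))


def rotation_from_gyro(omega, dt):
    """Rotation matrix for a constant angular rate omega (rad/s, 3-vector) over dt seconds (Rodrigues' formula)."""
    rate = math.sqrt(sum(w * w for w in omega))
    theta = rate * dt
    if theta < 1e-12:
        return IDENTITY
    kx, ky, kz = (w / rate for w in omega)  # unit rotation axis
    c, s = math.cos(theta), math.sin(theta)
    v = 1.0 - c
    return (
        (c + kx * kx * v, kx * ky * v - kz * s, kx * kz * v + ky * s),
        (ky * kx * v + kz * s, c + ky * ky * v, ky * kz * v - kx * s),
        (kz * kx * v - ky * s, kz * ky * v + kx * s, c + kz * kz * v),
    )


def matmul(a, b):
    """3x3 matrix product a @ b."""
    return tuple(tuple(sum(a[i][k] * b[k][j] for k in range(3)) for j in range(3)) for i in range(3))


def relative_rotation(gyro_samples):
    """Compose (omega, dt) samples recorded between two frames into the newer frame's rotation relative to the older one."""
    r = IDENTITY
    for omega, dt in gyro_samples:
        r = matmul(r, rotation_from_gyro(omega, dt))  # body-frame increments compose on the right
    return r


def prealign(points, r):
    """Express points from the newer camera frame in the older frame's coordinates: p_old = R @ p_new."""
    return [tuple(sum(r[i][k] * p[k] for k in range(3)) for i in range(3)) for p in points]
```

For example, ten samples of 0.1 s at π/2 rad/s about z compose to a 90° rotation, so a point at (1, 0, 0) in the newer frame maps to approximately (0, 1, 0) in the older one. Starting registration from this rotation, rather than from no rotation at all, is what makes the alignment easier.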

How integrated IMUs help 3D cameras see better in different lighting conditions

Enhanced tracking and mapping: Combining IMU data with 3D camera data gives the system an edge in spatial understanding. This fusion matters most where shadows, bright spots, or transitions between light and dark challenge the camera. As the camera moves, the IMU keeps track of changes in its orientation and position. So even if the visual feed is disrupted or confused by lighting variations, the fused data helps maintain an accurate spatial representation.

Camera stabilization: To stabilize a 3D camera's output, the system actively analyzes IMU motion data to predict and compensate for unwanted shifts. Even when an external force or a sudden movement shifts the camera, the IMU data helps negate the effect. As a result, users receive a stable, clear output that isn't adversely affected by abrupt lighting changes or physical disruptions.
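A back-of-the-envelope version of the compensation: for a pinhole camera with focal length f (in pixels), a small rotation Δθ about an axis perpendicular to the optical axis shifts the image near its center by roughly f·tan(Δθ) pixels, and a stabilizer would move its output window by the opposite amount. The sketch below assumes a constant gyro rate over one frame interval and an illustrative focal length of 500 px; neither number comes from the DepthVista documentation, and the signs depend on how the camera axes are defined. The function names are hypothetical.

```python
import math


def image_shift_px(rate_rad_s, dt_s, focal_px):
    """Approximate image-center shift (pixels) from rotating the camera at rate_rad_s for dt_s seconds."""
    delta_theta = rate_rad_s * dt_s  # rotation accumulated over the interval
    return focal_px * math.tan(delta_theta)


def compensating_offset(pan_rate, tilt_rate, dt_s, focal_px):
    """Offset (dx, dy) in pixels to apply to the output window to cancel the rotation-induced shift.

    pan_rate moves the view horizontally, tilt_rate vertically; both in rad/s (assumed axis convention).
    """
    return (-image_shift_px(pan_rate, dt_s, focal_px),
            -image_shift_px(tilt_rate, dt_s, focal_px))


if __name__ == "__main__":
    # Panning at 0.5 rad/s during one 30 fps frame interval, with an assumed 500 px focal length.
    dx, dy = compensating_offset(0.5, 0.0, 1 / 30, 500.0)
    print(f"horizontal correction: {dx:.2f} px")  # about -8.33 px
```

Even a moderate pan moves the image by several pixels per frame, which is why stabilization needs motion data at least as often as frames arrive.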

Reduced latency: Fast data processing is vital in many applications, especially real-time ones. An IMU integrated with a 3D camera feeds motion data continuously, usually at a higher rate than the camera's frame rate, which allows quicker visual adjustments. When lighting changes rapidly, such as when moving from a dim corridor into a brightly lit room, the system can adjust swiftly, so the user experiences minimal visual lag or disruption. This is crucial for applications like telepresence.
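One way an IMU reduces perceived latency is prediction: because IMU samples usually arrive more often than camera frames, the orientation estimated at a frame's capture time can be brought forward to the moment the output is actually used, using the gyro samples that arrived in between. The single-axis sketch below integrates those samples with a zero-order hold (each rate is held until the next sample). The function is hypothetical, timestamps are assumed to share one clock, and a full implementation would propagate a 3D rotation rather than a single angle.

```python
def predict_angle(frame_angle, frame_time, gyro_samples, target_time):
    """Propagate a single-axis angle (rad) from frame_time to target_time (s).

    gyro_samples -- list of (timestamp_s, rate_rad_s) sorted by timestamp, on the same clock as the camera
    """
    angle = frame_angle
    t = frame_time
    rate = 0.0  # assume no motion until a gyro sample says otherwise
    for ts, r in gyro_samples:
        if ts <= frame_time:
            rate = r  # latest rate known at capture time
            continue
        if ts >= target_time:
            break
        angle += rate * (ts - t)  # hold the previous rate up to this sample
        t, rate = ts, r
    angle += rate * (target_time - t)  # finish the interval with the newest rate
    return angle


if __name__ == "__main__":
    samples = [(i * 0.005, 1.0) for i in range(10)]  # assumed 200 Hz gyro reading a constant 1 rad/s
    print(predict_angle(0.0, 0.0, samples, 0.02))  # approximately 0.02
```

The prediction only covers the gap between capture and use, so errors from holding each rate constant stay small when that gap is a few milliseconds.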

Energy efficiency: IMUs help in two ways. First, they consume minimal power, so they add little to the system's overall energy footprint. Second, combined with a 3D camera, they let the camera rely less on computationally intensive tasks, such as continuously recalibrating in fluctuating light. The camera can delegate some responsibilities to the IMU, reducing its workload and, in turn, its power consumption, which helps prolong operating time.
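A minimal sketch of this kind of delegation, assuming the expensive step is something like re-registering or recalibrating depth data: a cheap IMU check decides whether the device is effectively stationary, and the costly processing is skipped while it is. The thresholds are illustrative assumptions that would need tuning to a real sensor's noise levels, and the function names are hypothetical.

```python
import math

GRAVITY = 9.81  # m/s^2


def is_stationary(accel, gyro, accel_tol=0.2, gyro_tol=0.02):
    """True when the accelerometer reads about 1 g and the gyro about 0 rad/s (tolerances are assumptions)."""
    accel_norm = math.sqrt(sum(a * a for a in accel))
    gyro_norm = math.sqrt(sum(w * w for w in gyro))
    return abs(accel_norm - GRAVITY) < accel_tol and gyro_norm < gyro_tol


def should_reprocess(imu_samples_since_last_frame):
    """Re-run the expensive depth step only if some (accel, gyro) sample since the last frame shows motion."""
    if not imu_samples_since_last_frame:
        return True  # no IMU data: fall back to processing every frame
    return any(not is_stationary(a, g) for a, g in imu_samples_since_last_frame)
```

The check costs a few multiplications per IMU sample, so it can run on every frame while the heavier processing runs only when the scene may actually have changed relative to the camera.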

Increased versatility: Whether navigating foggy environments, transitioning between indoor and outdoor settings, or compensating for flickering artificial lights, the integrated system keeps performance stable. By syncing data from both sources, the camera can interpret its surroundings and adjust its operations, so varying lighting conditions don't compromise efficiency or output quality.
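Syncing the two sources usually starts with timestamps. A minimal sketch, assuming the camera and IMU timestamps are sorted and on a common clock: each depth frame is paired with the IMU samples recorded since the previous frame, which is the set a motion-integration step would consume for that frame. The 200 Hz IMU rate in the example is an assumption, not a DepthVista specification, and the function name is hypothetical.

```python
from bisect import bisect_right


def imu_windows(frame_times, imu_times):
    """For each frame after the first, return the indices of IMU samples with
    frame_times[k-1] < t <= frame_times[k]. Both lists must be sorted and on one clock.
    """
    windows = []
    for prev, cur in zip(frame_times, frame_times[1:]):
        start = bisect_right(imu_times, prev)  # first sample strictly after the previous frame
        stop = bisect_right(imu_times, cur)    # one past the last sample at or before this frame
        windows.append(list(range(start, stop)))
    return windows


if __name__ == "__main__":
    frames = [k / 30 for k in range(3)]  # depth frames at 30 fps
    imu = [i / 200 for i in range(20)]   # assumed 200 Hz IMU
    print([len(w) for w in imu_windows(frames, imu)])  # [6, 7]
```

Binary search keeps the pairing cheap even for long recordings; the uneven 6/7 split simply reflects that 200 Hz is not a multiple of 30 fps.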

Low-light performance: The challenge in low light isn't just capturing data but ensuring that the data is consistent and reliable. While the 3D camera, with its global shutter, captures depth data in dimly lit scenes, the IMU tracks even minor movements of the camera, so the depth data collected in low light stays spatially accurate. For devices like drones or autonomous robots that operate in varying light conditions, combining a 3D camera's low-light performance with an IMU's motion tracking makes data capture more consistent and reliable.

Top applications that need an integrated IMU-3D camera solution

Robotic navigation: A broad field of view combined with a global shutter sensor makes these cameras well suited to sophisticated applications like robotic navigation. Where split-second decisions make a difference, such as avoiding obstacles or following a precise path, an IMU feature helps ensure fast, accurate data collection and interpretation.

AR & VR applications: While the IMU provides precise motion tracking and orientation data in real-time, the 3D camera captures depth information, enabling a more spatially aware representation of the environment. Together, they bridge the gap between the virtual and physical worlds, allowing for more realistic interactions and smooth blending of augmented objects with real surroundings.

Gesture/motion control for telepresence robots: The IMU offers accurate motion data, ensuring the robot’s movements are seamless and aligned with user commands. Simultaneously, the 3D camera interprets depth and spatial relationships, enabling the robot to accurately recognize and respond to human gestures. This ensures that telepresence robots can engage in more intuitive and human-like interactions.

e-con Systems’ 3D ToF USB camera now has the IMU feature

e-con Systems, in its relentless pursuit of innovation, has integrated the IMU feature into DepthVista_USB_RGBIRD, our state-of-the-art 3D ToF USB camera. It combines an AR0234 color global shutter sensor from onsemi® with a 3D depth sensor that streams depth data at 640 x 480 resolution and 30 frames per second, so a single camera can serve both object recognition and obstacle detection. This 3D ToF camera can also function in complete darkness, and it uses an 850 nm VCSEL (vertical-cavity surface-emitting laser), which is safer for human eyes.
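For a sense of scale, the stated depth mode of 640 x 480 at 30 frames per second works out to about 9.2 million depth samples per second. The bytes-per-sample figure below is an assumption (16-bit depth values, a common choice) and not a DepthVista specification; the camera's actual output format and any additional IR or RGB streams would change the total.

```python
WIDTH, HEIGHT, FPS = 640, 480, 30  # depth mode stated for DepthVista_USB_RGBIRD
BYTES_PER_SAMPLE = 2               # assumption: 16-bit depth values

samples_per_second = WIDTH * HEIGHT * FPS
megabits_per_second = samples_per_second * BYTES_PER_SAMPLE * 8 / 1e6

print(f"{samples_per_second:,} depth samples/s")         # 9,216,000 depth samples/s
print(f"{megabits_per_second:.1f} Mbit/s of raw depth")  # 147.5 Mbit/s of raw depth
```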

The IMU feature upgrade is supported by detailed source code, released as part of the brand-new SDK package for DepthVista_USB_RGBIRD, along with Python compatibility.

This makes it an ideal choice for product developers who want out-of-the-box capabilities for building next-generation embedded vision devices.

Read more about DepthVista_USB_RGBIRD

Please visit the Camera Selector page to explore e-con Systems’ camera portfolio.

If you need help integrating 3D ToF cameras with IMU capabilities into your embedded vision product, don’t hesitate to contact us at camerasolutions@e-consystems.com.

Prabu Kumar is the Chief Technology Officer and Head of Camera Products at e-con Systems, with more than 15 years of experience in the embedded vision space. He brings deep knowledge of USB cameras, embedded vision cameras, vision algorithms, and FPGAs. He has built 50+ camera solutions spanning domains such as medical, industrial, agriculture, retail, biometrics, and more. He also has expertise in device driver development and BSP development. Currently, Prabu's focus is on building smart camera solutions that power new-age AI-based applications.

The content & opinions in this article are the author’s and do not necessarily represent the views of RoboticsTomorrow
