One person can supervise 'swarm' of 100 unmanned autonomous vehicles, OSU research shows

Addressing the Skills Gap in Manufacturing through Robotics Training

Amazon and iRobot agree to terminate pending acquisition

How to Handle Post-Holiday Returns with Warehouse Automation

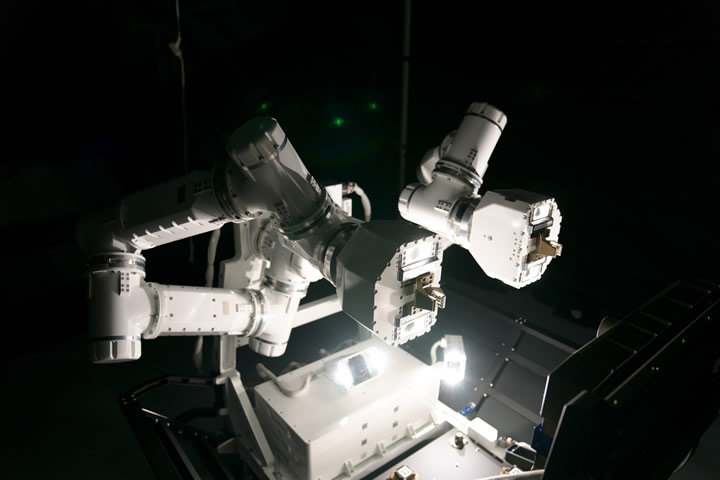

GITAI Autonomous Robotic Arm Set to Launch on Jan. 29 to International Space Station

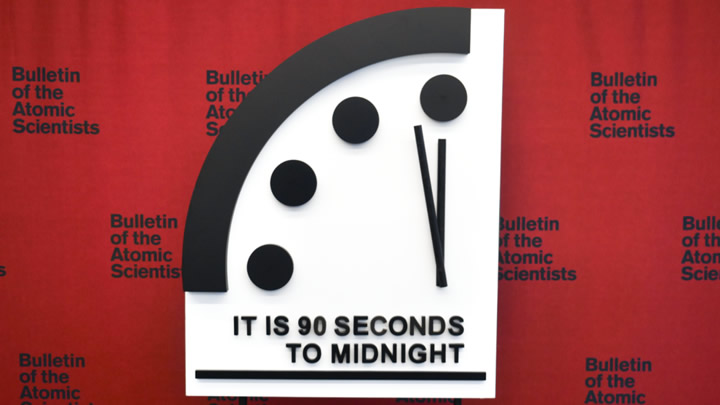

Doomsday Clock Remains at 90 Seconds to Midnight

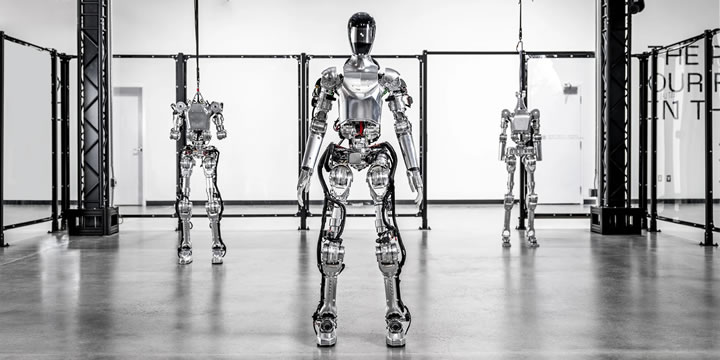

CES 2024 Spotlight: Kepler's Humanoid Robot Launch Gains International Recognition

Figure announces commercial agreement with BMW Manufacturing to bring general purpose robots into automotive production

Study: Robots and AI Offer Significant Carbon Emissions Reductions by Operating Centuries-Old Industries Better

Following the Prompts: Generative AI Powers Smarter Robots With NVIDIA Isaac Platform

DJI's First Delivery Drone Takes Flight Globally

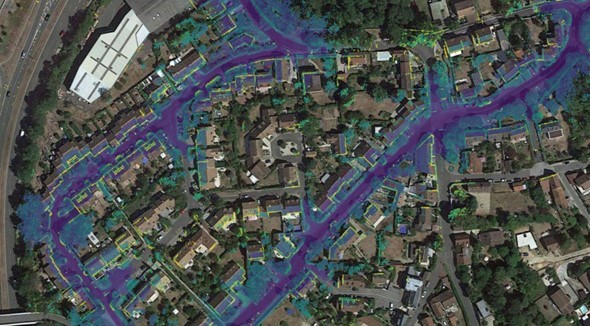

CES 2024: Exwayz unveils Exwayz 3D Mapping, the first city-scale mapping software to allow robot navigation in limitless environments

The Role of Robotics in Agrovoltaics: 5 Interesting Developments

H2 Clipper Achieves Major Advanced "Swarm Robotics" Breakthrough For Large Scale Airship Manufacturing

Why Vision-Guided Systems Elevate Assembly Line Precision

