Clevon's T-Mobile Powered Autonomous Delivery Robot Fleet Zooms Into Smart City Peachtree Corners

AI and AM: A Powerful Synergy

Security Must Be Addressed Before Autonomous Delivery Can Thrive

Meet the Maker: Developer Taps NVIDIA Jetson as Force Behind AI-Powered Pit Droid

Sarcos and Blattner Company Sign Agreement for Development of Autonomous Robotic Solar Construction System

7 Reasons Robots Make a Huge Difference in Food Processing

Simbe Raises $28M Series B, Led by Eclipse, to Continue Transforming Retail Operations Through AI and Automation

Chipotle Partners with Vebu to Test Autocado Prototype, a Robotic Solution to Guacamole Prep

Aigen Unveils the World's First AI-Driven, Solar-Powered, Agricultural Robotics Service

How Construction Companies Are Automating Workflows

Mori3 - Walking quadruped

Valmont Records Longest BVLOS Drone Flight on the Wings of T-Mobile 5G

Serve Unveils Commercial Deal with Uber to Enable Scaling of Robotic Delivery

AI Robots Rolling Out in Industry: Which Sectors Benefit Most?

OrionStar Robotics to Unveil Fully-Automated Restaurant Solution at NRA 2023

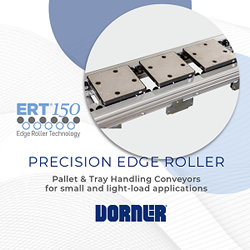

Featured Product