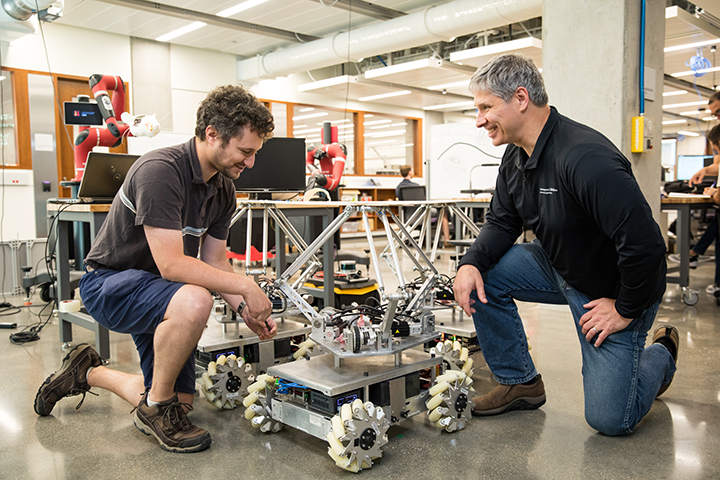

The Center for Robotics and Biosystems at Northwestern

Q&A with Kevin Lynch, Chair and Professor of Mechanical Engineering and Director, Center for Robotics and Biosystems at Northwestern

Please give me an overview of the new Center for Robotics and Biosystems at Northwestern.

The Center is a group of researchers, a space, and an idea. The idea is that the study of biological systems informs and accelerates our development of robot systems; that robots can be used to better understand biological systems; and that man will increasingly merge with machine. The space is a new 12,000-square-foot facility that includes collaboration space, shared robots, and electromechanical prototyping facilities. The researchers come from several departments and institutions, including mechanical engineering, biomedical engineering, computer science, electrical and computer engineering, the medical school, and the Shirley Ryan AbilityLab. We have more than 20 core and affiliated faculty, and the core faculty includes me, Brenna Argall, Ed Colgate, Matt Elwin, Mitra Hartmann, Malcolm MacIver, Michael Peshkin, Mike Rubenstein, and Paul Umbanhowar.

How is it different from other university robotics centers?

Our work on human-robot systems is facilitated by our close collaboration with Northwestern’s Feinberg School of Medicine and the Shirley Ryan AbilityLab, which is based in Chicago and is the nation’s #1 research and clinical rehabilitation hospital.

What is the long-term vision for the center?

We are working toward the intimate physical integration of humans and robots. One example is robots that assist humans in collaborative physical tasks, like manipulating heavy objects in a warehouse. We call these collaborative robots “cobots,” a term coined in our lab in the mid-1990s. Other examples include brain-machine interfaces, advanced prosthetics, neuroprosthetics (where implanted muscle stimulators activate muscles), powered exoskeletons, rehabilitation robots that assist in motor training after stroke, and smart wheelchairs that adapt their autonomy level according to the user’s impairment. Related work on human-robot interfaces includes haptic interfaces, which allow the user to feel virtual objects, and drone-assisted perception, where drones fly overhead to automatically fill in the gaps in a person’s visual field.

We believe robots will continue to be more tightly integrated in our everyday life, and our goal is a synergistic co-evolution of humans and robots.
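Smart wheelchairs that adapt their autonomy level are often built on shared control, in which the user's joystick command and an autonomous planner's command are combined into the command actually sent to the motors. A common scheme is linear blending, where a single weight sets how much authority the robot receives. The Python sketch below assumes that scheme and a hypothetical mapping from an impairment score in [0, 1] directly to the blending weight; the `Command` type and both functions are illustrative, not the Center's controller.

```python
from dataclasses import dataclass


@dataclass
class Command:
    linear: float   # forward speed, m/s
    angular: float  # turn rate, rad/s


def autonomy_level(impairment: float) -> float:
    """Hypothetical mapping: impairment score in [0, 1] -> robot share alpha in [0, 1].
    Out-of-range scores are clamped."""
    return min(1.0, max(0.0, impairment))


def blend(user: Command, robot: Command, alpha: float) -> Command:
    """Linear blending: alpha = 0 is full user control, alpha = 1 is full autonomy."""
    return Command(
        linear=(1.0 - alpha) * user.linear + alpha * robot.linear,
        angular=(1.0 - alpha) * user.angular + alpha * robot.angular,
    )


# A joystick command that veers to one side, and a planner command that goes straight.
user_cmd = Command(linear=0.8, angular=0.5)
planner_cmd = Command(linear=0.6, angular=0.0)
for score in (0.0, 0.25, 0.75):
    print(score, blend(user_cmd, planner_cmd, autonomy_level(score)))
```

A more impaired user gets a command closer to the planner's; a real system would also need to infer the user's intended goal, which this sketch takes as given.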

You say that robots are collaborators, not competition. That is a comforting thought. What does it mean?

Many of the robots we are working on are designed to augment human abilities, as in the case of cobots; recover human abilities, as in the case of rehabilitation robots; or replace lost human abilities, as in the case of prosthetics. We view these robots as collaborators: their ultimate purpose is to assist humans, and often the robots are not fully autonomous on their own, since they require a connection to a human to function.

There is no doubt that advanced robotics is, and will be, a disruptive technology that impacts the way we work. Some robots will compete with human labor and displace it. New employment opportunities will be created, but some transitions may be painful. I personally believe that public policy must evolve along with the technology, to help ensure that the benefits of robotics are shared.

I understand Northwestern has a long history of research and commercialization of robotics. Can you give some examples of the work that led to this new center?

The roots of Northwestern’s robotics work extend back to the 1950s, when Prof. Dick Hartenberg and his PhD student Jacques Denavit published the “Denavit-Hartenberg parameters,” or DH parameters, to concisely describe the kinematics of mechanisms. DH parameters have been enshrined in nearly every introductory robotics textbook ever written.
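To make "concisely describe" concrete: in the classic DH convention each joint i is described by just four numbers, the joint angle θ_i, the link offset d_i, the link length a_i, and the link twist α_i, and the pose of link i relative to link i−1 is the product Rot_z(θ_i)·Trans_z(d_i)·Trans_x(a_i)·Rot_x(α_i). The minimal Python sketch below chains one such 4×4 transform per joint to compute forward kinematics. The function names and the two-link example arm are illustrative assumptions, and the sketch uses the classic (distal) convention rather than the modified convention that some textbooks use.

```python
import math

Matrix = list[list[float]]


def dh_transform(theta: float, d: float, a: float, alpha: float) -> Matrix:
    """Homogeneous transform from frame i-1 to frame i (classic DH):
    Rot_z(theta) * Trans_z(d) * Trans_x(a) * Rot_x(alpha)."""
    ct, st = math.cos(theta), math.sin(theta)
    ca, sa = math.cos(alpha), math.sin(alpha)
    return [
        [ct, -st * ca, st * sa, a * ct],
        [st, ct * ca, -ct * sa, a * st],
        [0.0, sa, ca, d],
        [0.0, 0.0, 0.0, 1.0],
    ]


def matmul(A: Matrix, B: Matrix) -> Matrix:
    """Multiply two 4x4 matrices."""
    return [[sum(A[i][k] * B[k][j] for k in range(4)) for j in range(4)] for i in range(4)]


def forward_kinematics(dh_rows: list[tuple[float, float, float, float]]) -> Matrix:
    """Chain one DH transform per joint; each row is (theta, d, a, alpha).
    Returns the base-to-end-effector transform."""
    T = [[1.0 if i == j else 0.0 for j in range(4)] for i in range(4)]
    for theta, d, a, alpha in dh_rows:
        T = matmul(T, dh_transform(theta, d, a, alpha))
    return T


# Hypothetical planar two-link arm with unit link lengths, joint 1 at 90 degrees.
T = forward_kinematics([(math.pi / 2, 0.0, 1.0, 0.0), (0.0, 0.0, 1.0, 0.0)])
print("end effector at", [round(T[i][3], 6) for i in range(3)])
```

The last column of the result holds the end-effector position; the whole arm geometry lives in one small table of (θ, d, a, α) rows, which is what made the notation so durable.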

More recently, the invention of cobots in the mid-90s led to the formation of the company Cobotics, LLC, which focused on intelligent assist devices in warehouses and factories. Cobotics was acquired by Stanley Assembly Technologies in 2002.

Z-KAT, Inc., was a robotic surgery company built on Northwestern research. It eventually became MAKO Surgical Corp., which was acquired by Stryker in 2013.

The KineAssist robot, designed for walking rehabilitation in people who have had a stroke, became the first product of the company Kinea Design. Kinea was acquired by HDT Global and is currently operating as HDT Robotics.

Technology developed in the lab for automated vibratory parts feeding was acquired by the Swiss company Asyril, which now sells a line of automated feeders for precision assembly tasks.

The latest spin-off is Tanvas, which makes “surface haptic displays” that use electroadhesion to create programmable tactile features and textures on the surface of a glass touchscreen.
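A common first-order way to reason about electroadhesion on a touchscreen is to treat the fingertip and the screen electrode as a parallel-plate capacitor: the voltage adds an electrostatic pull F_e = ε0·εr·A·V²/(2g²) to the finger's own normal force, and Coulomb friction then grows as μ(F_n + F_e). Switching the voltage on and off as the finger moves creates a spatial pattern of high and low friction that is felt as a texture. The Python sketch below assumes that simplified model, a hypothetical square-wave "grating" texture, and illustrative parameter values; it ignores the electrical details of skin and of real drive electronics and is not Tanvas's method.

```python
EPSILON_0 = 8.854e-12  # vacuum permittivity, F/m


def electroadhesive_force(voltage: float, area: float, gap: float, eps_r: float) -> float:
    """Parallel-plate estimate of electrostatic attraction (N): eps0*eps_r*A*V^2 / (2*g^2)."""
    return EPSILON_0 * eps_r * area * voltage**2 / (2.0 * gap**2)


def friction_force(normal_force: float, voltage: float, mu: float,
                   area: float, gap: float, eps_r: float) -> float:
    """Coulomb friction: the electrostatic pull adds to the finger's own normal force."""
    return mu * (normal_force + electroadhesive_force(voltage, area, gap, eps_r))


def grating_voltage(x: float, period: float, v_on: float) -> float:
    """Hypothetical texture: voltage on for the first half of each spatial period (m)."""
    return v_on if (x % period) < period / 2 else 0.0


# Illustrative parameters only: 1 cm^2 contact, 10 um effective gap, eps_r = 3,
# 0.5 N finger force, friction coefficient 0.8, 1 mm grating driven at 100 V.
for step in range(8):
    x = (step + 0.5) * 0.00025  # finger position (m), sampled mid-way between edges
    v = grating_voltage(x, period=0.001, v_on=100.0)
    f = friction_force(0.5, v, mu=0.8, area=1e-4, gap=1e-5, eps_r=3.0)
    print(f"x = {x * 1000:.3f} mm  V = {v:5.1f}  friction = {f:.3f} N")
```

Because the pull scales with V², doubling the voltage quadruples the added friction in this model, which is why the drive voltage is a natural knob for programming texture strength.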

What are examples of robotics inspired by biology?

The freshwater black ghost knifefish navigates murky environments using a fascinating electric sense. It generates its own time-varying electric field from its head to its tail, and it uses voltage sensors spread over its body to detect objects within about one body length. Anything with an electrical impedance different from that of water shows up as a signal on these sensors, which the fish can interpret as prey to be eaten or as a cave to hide in. We have built artificial versions of this sensory capability to complement the long-range sensing modalities of underwater vehicles, such as sonar. This short-range sensing is particularly relevant for maneuvering in tight spaces.
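Why does any object whose impedance differs from the water's produce a signal? A textbook simplification treats the fish's field near a small object as locally uniform (strength E0) and the object as a sphere of radius a and conductivity σ_obj in water of conductivity σ_w. Solving Laplace's equation gives an extra potential δφ = χ·E0·a³·cos θ / r² at distance r from the sphere's center, with contrast factor χ = (σ_obj − σ_w)/(σ_obj + 2σ_w). The Python sketch below evaluates that formula; it assumes purely resistive (conductivity-only) objects, a uniform field, and illustrative numbers, and it is not the Center's sensor model.

```python
import math


def contrast_factor(sigma_object: float, sigma_water: float) -> float:
    """chi = (sigma_obj - sigma_w) / (sigma_obj + 2 sigma_w).
    Ranges from -1/2 (perfect insulator) toward +1 (perfect conductor)."""
    return (sigma_object - sigma_water) / (sigma_object + 2.0 * sigma_water)


def perturbation_potential(e0: float, radius: float, r: float, theta: float,
                           sigma_object: float, sigma_water: float) -> float:
    """Extra potential (V) at a sensor a distance r (m) from the center of a
    sphere of the given radius (m) in a locally uniform field e0 (V/m).
    theta is the angle between the field and the sphere-to-sensor direction."""
    if r <= radius:
        raise ValueError("sensor must lie outside the sphere")
    chi = contrast_factor(sigma_object, sigma_water)
    return chi * e0 * radius**3 * math.cos(theta) / r**2


# Illustrative (not measured) values: water 0.01 S/m, 1 cm sphere, 10 mV/m field.
for name, sigma in [("metal", 1e6), ("plastic", 0.0), ("water-like", 0.01)]:
    signals = [perturbation_potential(0.01, 0.01, r, 0.0, sigma, 0.01)
               for r in (0.02, 0.04, 0.08)]
    print(name, ["%.2e" % v for v in signals])
```

Conductors and insulators give signals of opposite sign, an object matched to the water is invisible, and the signal drops fourfold each time the distance doubles. In a real fish the field itself also weakens away from the body, so the usable range is shorter still, consistent with sensing on the scale of a body length.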

Another example is inspired by how some mammals, such as rats, can actively use their whiskers to learn the geometric and material properties of their environment. We are tracing signals passing through the full neural pathway, from sensors at the base of the whiskers, through neural processing in the brain, and back to muscles that drive the motion of the whiskers. We are also developing artificial whisker sensors as shape sensors for robot manipulators and as fluid flow sensors for underwater vehicles.
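One simple way an artificial whisker can serve as a shape sensor follows from statics: for a single point contact pushing perpendicular to a thin whisker with small deflection, the bending moment at the base is M = F·d, so the radial distance to the contact is d = M/F when both the moment and the transverse force are measured at the base. The Python sketch below assumes exactly that idealized sensor (the `BaseReading` fields are hypothetical) and simulates a whisker sweeping against a flat wall. It ignores large deflections, friction, and slip, and is not the Center's estimation method.

```python
import math
from dataclasses import dataclass
from typing import Optional


@dataclass
class BaseReading:
    """Hypothetical reading from a sensor at the whisker base."""
    moment: float            # bending moment at the base, N*m
    transverse_force: float  # force perpendicular to the whisker, N


def contact_distance(reading: BaseReading, min_force: float = 1e-6) -> Optional[float]:
    """Radial distance (m) from base to a single point contact, d = M / F.
    Returns None when the force is too small for a reliable estimate."""
    if abs(reading.transverse_force) < min_force:
        return None
    return reading.moment / reading.transverse_force


def contact_point(angle: float, reading: BaseReading) -> Optional[tuple[float, float]]:
    """Place the estimated contact in the plane, whisker base at the origin."""
    d = contact_distance(reading)
    if d is None:
        return None
    return (d * math.cos(angle), d * math.sin(angle))


# Simulated sweep against a flat wall at x = 0.03 m (all numbers illustrative).
WALL_X, LENGTH, FORCE = 0.03, 0.05, 0.002
points = []
for deg in range(-40, 41, 10):
    angle = math.radians(deg)
    d_true = WALL_X / math.cos(angle)  # where the whisker line meets the wall
    if d_true > LENGTH:
        continue  # wall is out of reach at this angle
    reading = BaseReading(moment=FORCE * d_true, transverse_force=FORCE)
    points.append(contact_point(angle, reading))
print(points)
```

Every recovered point lands on the wall at x = 0.03 m, so sweeping the whisker traces out the wall's shape; real whiskers that bend appreciably need more elaborate mechanical models.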

I understand that the Center for Robotics and Biosystems is active in robotics education innovation. Tell me about it.

The standard way to teach electronics is to lecture in the classroom and then hold a three-hour lab in a specialized facility, with oscilloscopes, function generators, multimeters, etc., where the students experiment with circuits. This approach is uninspiring: the long labs, which are fixed in time and space, suppress students’ natural enthusiasm and creativity.

Since all students now have laptops, we designed a small, portable oscilloscope and function generator that connects to a laptop’s USB port. Following a successful Kickstarter campaign, it is now available at nscope.org for less than $100, about the cost of a textbook. Students now bring their electronics kits to class for real-time prototyping between mini-lectures, and they can work on their projects on their own schedule in their dorm rooms. This hardware and teaching method have been adopted by other classes at Northwestern and by many other universities.

We also have hundreds of educational videos on our YouTube channel, on topics including system dynamics, electronics design, mechatronics, and robot kinematics, dynamics, motion planning, and control. We currently have six online courses running on Coursera, forming the Modern Robotics specialization. Thousands of learners around the world have enrolled in these courses.

About Kevin Lynch

Kevin Lynch is professor and chair of the mechanical engineering department at Northwestern University and director of the Center for Robotics and Biosystems. His research is in robotic manipulation, locomotion, human-robot systems, and robot swarms. He is the recipient of the IEEE Robotics and Automation Early Career Award, the Harashima Award for Innovative Technologies, the National Science Foundation CAREER award, and Northwestern’s Charles Deering McCormick Professorship of Teaching Excellence. He is editor-in-chief of the IEEE Transactions on Robotics, an alum of the DARPA/IDA Defense Science Study Group, co-author of textbooks on robotics and mechatronics, instructor of six Coursera online courses forming the “Modern Robotics” specialization, and an IEEE fellow. He received his BSE in electrical engineering from Princeton University and his PhD in robotics from Carnegie Mellon University.

The content & opinions in this article are the author’s and do not necessarily represent the views of RoboticsTomorrow.