
Personalized Robot Assistance with TidyBot


Case Study from Kinova Robotics

Personalized Room Tidying: Navigating Organizational Preferences

Jeannette Bohg, Assistant Professor in the Computer Science Department at Stanford University and director of the Interactive Perception and Robot Learning lab, has led a project, in collaboration with Princeton University, to design a robot for personalized household cleanup.

Jeannette and her team task the robot with tidying up a room: pick up all objects off the floor and put each object where it belongs. One of the key challenges in this task is determining the correct receptacle for each object, because home organization is highly personal and different people have different preferences for where objects should go. One person might prefer shirts in the drawer, while another might want them on the shelf.

Automated Room Organization with TidyBot

Their approach is to collect example preferences from a user, and then use the summarizing capabilities of a large language model (LLM) to generalize those preferences. The user first provides a few examples of where specific objects go, and then an LLM converts the examples into more general rules that help determine placements for novel, unseen objects.
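The two-step idea described above (summarize examples into rules, then apply the rules to a new object) can be sketched as a pair of prompt builders. This is a minimal illustration only: the function names, the prompt wording, and the sample objects are assumptions made for this sketch, not the prompts used by the TidyBot team, and the call to an actual LLM is deliberately left out.

```python
from typing import Dict, List


def build_summarization_prompt(examples: Dict[str, str]) -> str:
    """Format user placement examples into a prompt asking for general rules.

    Hypothetical prompt wording, chosen for illustration only.
    """
    lines = [f"- {obj} -> {receptacle}" for obj, receptacle in examples.items()]
    return (
        "A user gave these examples of where objects belong:\n"
        + "\n".join(lines)
        + "\nSummarize them as general rules, one rule per receptacle."
    )


def build_placement_prompt(rules: str, new_object: str, receptacles: List[str]) -> str:
    """Ask where an unseen object should go, given the summarized rules."""
    return (
        f"Rules:\n{rules}\n"
        f"Available receptacles: {', '.join(receptacles)}\n"
        f"Which receptacle should '{new_object}' go in? Answer with one receptacle."
    )


# Example usage with made-up preferences.
examples = {
    "yellow shirt": "drawer",
    "white socks": "drawer",
    "dark jeans": "closet",
}
summary_prompt = build_summarization_prompt(examples)

# In a real system the rules text would come back from the LLM;
# here it is written by hand to keep the sketch self-contained.
rules_text = "Light-colored clothes go in the drawer. Dark clothes go in the closet."
placement_prompt = build_placement_prompt(rules_text, "black hoodie", ["drawer", "closet"])
```

Splitting the work into a summarization prompt and a placement prompt mirrors the description above: the rules are produced once from a handful of examples and can then be reused for every new object the robot encounters.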

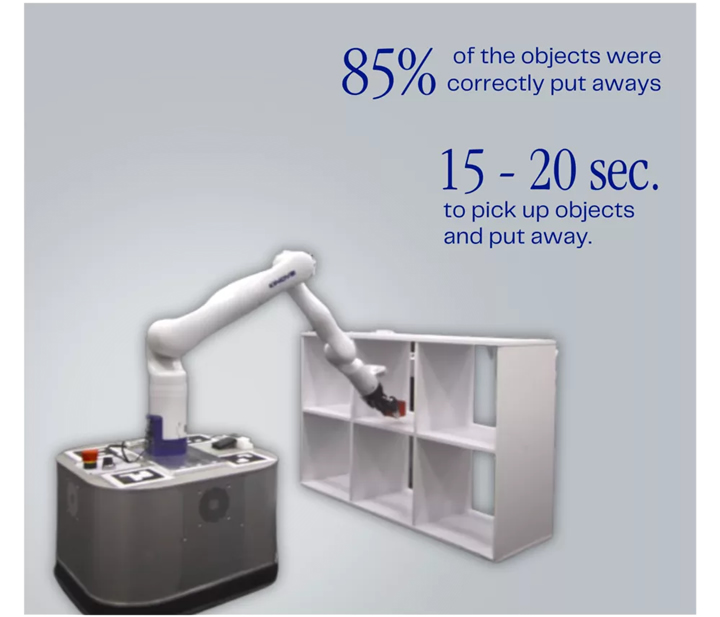

They deploy this approach on a real-world mobile manipulation system, which they call TidyBot. The mobile manipulator consists of a holonomic mobile base with a Kinova Gen3 arm mounted on top. The system operates by repeatedly locating the closest object, identifying it using an open-vocabulary image classifier, picking it up, and then putting it into its proper receptacle as specified by the generalized rules from the LLM.
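The operating loop described above can be sketched in a few lines. Everything here is a simplified stand-in: the `FloorObject` type, the `tidy` function, the dictionary of rules, and the `"unsorted"` fallback are hypothetical names introduced for illustration, the `label` field stands in for the output of the image classifier, and no real perception, navigation, or arm control is involved.

```python
import math
from dataclasses import dataclass
from typing import Dict, List, Tuple


@dataclass
class FloorObject:
    label: str  # stand-in for the classifier's output
    x: float
    y: float


def closest_object(robot_xy: Tuple[float, float], objects: List[FloorObject]) -> FloorObject:
    """Return the object with the smallest Euclidean distance to the robot."""
    return min(objects, key=lambda o: math.hypot(o.x - robot_xy[0], o.y - robot_xy[1]))


def tidy(
    robot_xy: Tuple[float, float],
    objects: List[FloorObject],
    rules: Dict[str, str],
    default: str = "unsorted",  # assumed fallback when no rule matches
) -> List[Tuple[str, str]]:
    """Repeatedly pick the closest remaining object and assign it a receptacle."""
    remaining = list(objects)
    log = []
    while remaining:
        target = closest_object(robot_xy, remaining)
        receptacle = rules.get(target.label, default)
        log.append((target.label, receptacle))
        # Simplification: the robot is assumed to stay where it picked the object up.
        robot_xy = (target.x, target.y)
        remaining.remove(target)
    return log


objects = [
    FloorObject("sock", 3.0, 0.0),
    FloorObject("apple", 1.0, 0.0),
    FloorObject("toy", 2.0, 0.0),
]
rules = {"sock": "laundry basket", "apple": "fridge"}
result = tidy((0.0, 0.0), objects, rules)
```

In this sketch the rules are a plain lookup table keyed by label; in the system described above, the placement decision comes from the generalized rules produced by the LLM.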

Developing a Mobile Tidying Robot

The key objective was to develop a mobile robot capable of tidying up a room. This means picking up all objects off the floor and putting them into their proper place according to user preferences.

TidyBot in Action: Evaluating Real-World Performance

The resulting system, TidyBot, was evaluated in 8 real-world test scenarios, each with 10 objects and 2 to 5 receptacles. The scenarios contain objects that are commonly found in real homes, such as clothes, toys, and food items. Across all scenarios, the team found that TidyBot correctly put away 85% of objects. On average, each object took 15 to 20 seconds to pick up and put away.

