Advances in genetics, brain research, artificial intelligence, bionics and nanotechnology are converging toward the goal of overcoming human limitations and creating higher forms of artificially intelligent life. By 2035, the robotics industry could rival the automobile and computer industries in both sales and jobs.

Len Calderone

Intelligence is the capacity for learning, reasoning, understanding, and similar forms of mental activity, while artificial intelligence (AI) is the capacity of a computer to perform operations analogous to learning and decision making in humans.

To accomplish everyday tasks, robots need substantial knowledge of the objects with which they will interact, the environment in which they will function, and the results of the actions they will perform. For a robot to make competent decisions, it must have encyclopedic knowledge of the procedures it must follow and the properties of the objects it must manipulate.

IBM’s Watson computer won the quiz show Jeopardy! in 2011. Watson’s predecessor was IBM’s Deep Blue, which defeated chess champion Garry Kasparov. At the heart of Watson is an advanced natural language processing system that can deal with the nuances of our colloquial, idiomatic language and understand the metaphors, analogies, and other quirks of how we speak. More importantly, the key to Watson is its ability to learn.

Watson will prepare for the medical board exams by asking and answering medical questions, using the same learning improvements that drove its rise to Jeopardy! fame.

Watson can sift through the equivalent of about 1 million books, or roughly 200 million pages of data, analyze this information, and provide precise responses in less than three seconds. Watson is now digesting the medical literature related to cancer so that it can assist doctors in the diagnosis and treatment of different cancers. Because a computer never has a memory glitch or loses information, it is the perfect companion to the oncologist, who must analyze many factors during a patient’s treatment. Doctors plan to use Watson’s analytic capabilities to consider prior cases, the state-of-the-art clinical knowledge in the medical literature, and clinical best practices to help a physician make a diagnosis and proceed with a course of treatment.

As an example, to diagnose bladder cancer, Stanford University researchers developed an algorithm that uses the characteristics of two molecules normally found in healthy bladder cells. For the diagnosis, the computer works backwards, checking thousands of protein databases to find a correlation with a molecule that is not a match, which indicates bladder cancer.

In the financial arena, Citibank is constantly developing new ways to serve its customers' financial needs, so the bank has entered into an agreement with IBM to use Watson to provide customers with the latest secure services designed around their increasingly digital and mobile lives. Watson's ability to analyze the meaning and context of human language, and to quickly process vast amounts of information to suggest options targeted to a consumer's individual circumstances, can help decision-makers identify opportunities, evaluate risks, and explore the alternative actions best suited to the bank’s clients. Imagine Watson playing the stock market.

AI does not try to recreate the human brain, but instead uses techniques like machine learning, massive knowledge bases, and clever algorithms to solve specific problems. Picture an AI robot in a retail store or a customer service department that can answer customers’ questions about any product in the store or resolve any service problem.

The partner ballroom dancing robot is a klutz-proof robot.

In Japan, robots have been taught to dance by instructors demonstrating the dance moves, which the robots duplicate. A learning robot can recognize that if it makes a certain move, such as a dance step, it will achieve a desired result. By storing this information, the robot can make the same move when it encounters the same situation in the future.
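The idea of storing which move worked in which situation can be illustrated with a very small lookup table. This is only a sketch of the general principle, not how the Japanese dance robots are actually programmed; the class name `MoveMemory` and the situation and move labels are hypothetical.

```python
from typing import Dict, Optional


class MoveMemory:
    """Remembers, per situation, the last move that produced the desired result."""

    def __init__(self) -> None:
        # Maps a situation label to the move that worked there (hypothetical labels).
        self._successful_moves: Dict[str, str] = {}

    def record(self, situation: str, move: str, succeeded: bool) -> None:
        """Store the move only if it achieved the desired result."""
        if succeeded:
            self._successful_moves[situation] = move

    def recall(self, situation: str) -> Optional[str]:
        """Return the remembered move for this situation, or None if unknown."""
        return self._successful_moves.get(situation)


if __name__ == "__main__":
    memory = MoveMemory()
    memory.record("waltz_beat_1", "step_forward", succeeded=True)
    memory.record("waltz_beat_2", "step_back", succeeded=False)
    print(memory.recall("waltz_beat_1"))
    print(memory.recall("waltz_beat_2"))
```

A real learning robot would have to generalize across similar situations rather than match them exactly, which is where the harder research problems lie.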

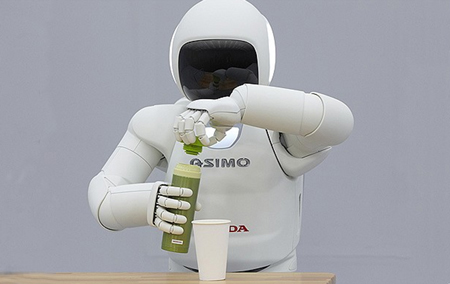

Last year, Honda unveiled the all-new ASIMO robot, one that can run, walk across bumpy surfaces, hop, and even pour coffee. It can identify different voices speaking at the same time and respond to different instructions from each one. It even used a bit of sign language, saying “My name is ASIMO” with its fingers.

ASIMO continuously assesses the input from multiple sensors, predicts the situation, and then determines its activities. This means that ASIMO can now respond to the movement of people and to surrounding situations, autonomously detecting and avoiding obstacles while walking down a busy office corridor. If ASIMO is about to collide with a person, it will stop and move out of the way. This technology also enables ASIMO to recognize faces and voices, allowing it to interact more with its surroundings and to hold objects gently with its fingers. It can grab a water bottle, unscrew the top, and pour the drink into a cup. All I want to know is, “ASIMO, where is my coffee?”

Simple autonomous robots use infrared or ultrasound sensors to detect obstructions. These sensors work the same way as echolocation in bats: the robot sends out a sound signal or a beam of infrared light and detects the signal's reflection. The robot determines the distance to an obstacle from how long it takes the signal to bounce back.
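The timing calculation is simple enough to sketch. The example below assumes an ultrasound sensor and a speed of sound of roughly 343 m/s (dry air at about 20 °C); the function name `echo_distance_m` is made up for illustration. Because the signal travels to the obstacle and back, the one-way distance is half the round-trip path.

```python
# Approximate speed of sound in dry air at about 20 degrees C (an assumption).
SPEED_OF_SOUND_M_PER_S = 343.0


def echo_distance_m(round_trip_s: float,
                    wave_speed_m_per_s: float = SPEED_OF_SOUND_M_PER_S) -> float:
    """Estimate the distance to an obstacle from an echo's round-trip time."""
    if round_trip_s < 0:
        raise ValueError("round-trip time must be non-negative")
    # The signal covers the distance twice (out and back), so halve the path.
    return wave_speed_m_per_s * round_trip_s / 2.0


if __name__ == "__main__":
    # A 10 ms round trip corresponds to an obstacle roughly 1.7 m away.
    print(f"{echo_distance_m(0.010):.3f} m")
```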

Advanced robots use stereo vision to see the scene around them. Two cameras give these robots depth perception, while image-recognition software gives them the capability to detect and catalog various objects. Autonomous robots might also use microphones to listen to their surroundings and employ smell sensors to detect scents.
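How two cameras yield depth can be shown with the standard pinhole-camera relation, in which depth equals focal length times baseline divided by disparity. The sketch below assumes two identical, rectified cameras mounted side by side; the function name and the numbers in the example are illustrative rather than taken from any particular robot.

```python
def stereo_depth_m(focal_length_px: float,
                   baseline_m: float,
                   disparity_px: float) -> float:
    """Depth of a point seen by two rectified cameras (pinhole model).

    focal_length_px: focal length expressed in pixels
    baseline_m:      distance between the two camera centers, in meters
    disparity_px:    horizontal shift of the point between the two images
    """
    if disparity_px <= 0:
        raise ValueError("disparity must be positive for a point at finite depth")
    # Nearby objects shift more between the two views, so depth falls
    # as disparity grows.
    return focal_length_px * baseline_m / disparity_px


if __name__ == "__main__":
    # Assumed values: 700 px focal length, cameras 10 cm apart, 35 px disparity.
    print(f"{stereo_depth_m(700.0, 0.10, 35.0):.2f} m")
```

The hard part in practice is finding which pixel in the left image matches which pixel in the right one; once that disparity is known, the depth follows from this one line of arithmetic.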

Quadrotors are autonomous flying robots with remarkable maneuverability and speed, and they can fly in swarms. These micro aerial vehicles (MAVs) are capable of autonomous navigation in GPS-denied environments, 3D mapping, and radio-beacon localization. The MAVs are fully autonomous: flight control is accomplished by computer vision using onboard cameras, with no laser rangefinder or GPS.

In the near future, MAVs will play a key role in tasks such as search and rescue, inspection, and environmental monitoring. You can throw a quadrotor into the air and have it automatically re-orient itself and hover, which is useful for deployment in less-than-stable conditions. A swarm of MAVs can fly in formation, maintaining precise spacing from one another and modifying their positions to navigate around obstacles.

So, where are AI and the autonomous robot going? IBM’s Watson used 2,880 processing cores to win at Jeopardy! in 2011. The power of computers has grown by a factor of about 1.5 each year, opening the way for the equivalent power of Watson to be included in your home PC within the next ten years or more.
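The arithmetic behind that projection can be worked through as compound growth. The sketch below takes the 1.5-times-a-year figure at face value and, purely as an assumption, treats core count as a stand-in for computing power and gives a 2011 home PC four cores; neither assumption comes from IBM, and the function names are invented for illustration.

```python
import math


def growth_factor(years: float, annual_growth: float = 1.5) -> float:
    """Total increase in computing power after a number of years."""
    return annual_growth ** years


def years_to_reach(power_ratio: float, annual_growth: float = 1.5) -> float:
    """Years of compound growth needed to close a given power gap."""
    if power_ratio <= 0 or annual_growth <= 1:
        raise ValueError("need power_ratio > 0 and annual_growth > 1")
    # Solve annual_growth ** years == power_ratio for years.
    return math.log(power_ratio) / math.log(annual_growth)


if __name__ == "__main__":
    watson_cores = 2880   # from the article
    home_pc_cores = 4     # assumed baseline, not from the article
    print(f"Growth over 10 years: {growth_factor(10):.1f}x")
    print(f"Years to close the gap: {years_to_reach(watson_cores / home_pc_cores):.1f}")
```

Under these assumptions, ten years of growth yields a factor of about 58, while closing a 720-fold gap takes roughly 16 years, which is why the estimate is better read as "ten years or more" than as exactly ten.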

Progress is being made on the thought-process side of AI, but it will take robotics to make an AI robot that can operate in the real world. Most of the robots in use today are industrial robots employed in manufacturing for welding, painting and production. The next step is to combine the “brain” power of Watson with the mobility of ASIMO to create a truly functioning humanoid. The next generation of robots will be able to interact with humans on a sophisticated level, making decisions and helping with everyday chores.

Advances in genetics, brain research, artificial intelligence, bionics and nanotechnology are converging toward the goal of overcoming human limitations and creating higher forms of artificially intelligent life. By 2035, the robotics industry could rival the automobile and computer industries in both sales and jobs.

So, what will you say to an intelligent robot?

For further information:

- Knowledge Processing for Autonomous Robot Control, Department of Informatics, Technische Universität München

- Stephen Baker, Final Jeopardy: Man vs. Machine and the Quest to Know Everything (Houghton Mifflin Harcourt, 2011)

- http://www.sciencedirect.com/science/article/pii/0921889095000542

- http://techland.time.com/2011/11/16/how-hondas-asimo-became-the-running-drink-serving-autonomous-robot-it-is-today/#ixzz1xb2vclbo

- http://www.autonomousrobotsblog.com/

The content & opinions in this article are the author’s and do not necessarily represent the views of RoboticsTomorrow
