How Open-Source Robotics Hardware Is Accelerating Research and Innovation

America may miss out on the next industrial revolution

New Study Shows U.S. is World Leader in Robotics Automation - With $732 Billion of Robots

ABB sells its first ever robot manufactured in the US to Hitachi Powdered Metals USA

Grocery 4.0: Ocado reshapes retail with robotics and automation

Miso Robotics Unveils "Flippy" in CaliBurger Kitchen, Plans Worldwide Rollout

Rise of the Robots

Brain-controlled robots

Table tennis playing robot breaks world record - Japan Tour

ST Robotics Offers New Super-Fast Robot Arm

Rise of Robots: Boon for Companies, Tax Headache for Lawmakers

Raspberry Pi-powered arm: This kit aims to make robotics simple enough for kids

Rethink Robotics Releases Intera 5: A New Approach to Automation

Robotics-focused ETFs see big gains, Trump could hasten trend

Microbots: Microsoft's multi-pronged robotics play takes shape

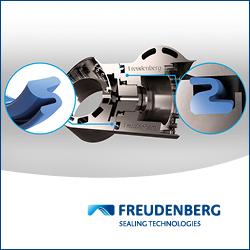
